Guidelines for Reporting Health Research: The EQUATOR Network's Survey of Guideline Authors

Published in the journal: PLoS Med 5(6): e139. doi:10.1371/journal.pmed.0050139

Category: Guidelines and Guidance

doi: https://doi.org/10.1371/journal.pmed.0050139

Introduction

Scientific publications are one of the most important outputs of any research, as they are the primary means of sharing the findings with the broader research community. The quality and relevance of research is mostly judged through the published report, which is often the only public record that the research was done. Unclear reporting of a study's methodology and findings prevents critical appraisal of the study and limits effective dissemination. Inadequate reporting of medical research carries with it an additional risk of inadequate and misleading study results being used by patients and health care providers. Patients may be harmed and scarce health care resources may be expended on ineffective health care treatments through such inadequate reporting. There is a wealth of evidence that much of published medical research is reported poorly [1–12]. Yet a good report is an essential component of good research.

Reporting guidelines, such as the CONSORT (Consolidated Standards of Reporting Trials) Statement for reporting the findings of randomised controlled trials [13], can lead to important improvements in the quality and reliability of published research. Since the development of the CONSORT Statement in 1996, several other guidelines have been developed relating to other types of research studies. Examples include QUOROM (for meta-analyses of randomised trials) [14], STARD (Standards for Reporting of Diagnostic Accuracy Studies) [15], STROBE (Strengthening the Reporting of Observational Studies in Epidemiology) [16], and REMARK (Reporting Recommendations for Tumour Marker Prognostic Studies) [17].

At present, the development of reporting guidelines lacks the coordination and focused collaboration found in, for example, the clinical practice guidelines field. Guideline development methods vary greatly. Dissemination and implementation of reporting guidelines rely mostly on passive publication of the guidelines, occasionally accompanied by editorials. Reporting guidelines are not routinely used on a large scale, and their potential is not being fully realised.

Summary points

- High-quality reporting in scientific publications is crucial for dissemination and implementation of research findings.
- Reporting guidelines, such as the CONSORT Statement, can improve accuracy and reliability of research reports, providing that they are themselves developed to high standards.
- This survey of developers of 37 generic reporting guidelines showed that: development methods were broadly similar but varied in important details; development usually took a long time; only half of the guideline developers had strategies for dissemination and implementation of their guidelines; and securing sufficient funding to develop, evaluate, and disseminate guidelines was a major problem.
- There is a need to harmonise methods used in the development of reporting guidelines and concentrate more on their active promotion, implementation, and evaluation.
- The EQUATOR Network (http://www.equator-network.org/) is a new initiative that aims to improve the quality of scientific literature. It provides resources and training on reporting of health research and assists in the development, dissemination, and implementation of reporting guidelines.

To remedy this situation, the National Knowledge Service of the UK National Health Service provided funds to set up the EQUATOR Network (Enhancing the Quality and Transparency of Health Research; http://www.equator-network.org/). This new initiative seeks to improve the quality of scientific publications by promoting transparent and accurate reporting. The Network provides resources and training relating to the reporting of health research and assists in the development, dissemination, and implementation of reporting guidelines.

The first project of the EQUATOR Network was to: (1) identify all available guidelines for reporting health research studies and (2) survey the authors of these guidelines to gather details about their development methodology, dissemination and implementation strategies, and problems encountered during those processes. Given sparse information on the benefits of guidelines [18,19], we also asked authors about published and unpublished evaluations of impact. This article reports the findings of our survey.

Methods

Identification of studies.

We identified published reporting guidelines through systematic searches of MEDLINE, EMBASE, The Cochrane Library, CINAHL, PsycINFO, Web of Science, and general Internet resources through a Google search. File S1 shows the MEDLINE search strategy. We also searched reference lists of relevant articles and personal collections of papers. We encountered various terms used for reporting guidance across the literature, such as reporting guidelines, recommendations, and standards. For the purposes of this article we use the term “guideline” without any implication regarding the methodology used for the guidance development.

Our aim was to initially identify all available guidance for reporting of scientific studies in health research. We deliberately set very broad inclusion criteria: any guidelines published in the period 1996 to 2006 with the objective of improving the reporting of research studies relating to health. One reviewer (IS) screened the records and selected potentially relevant papers. These full-text articles were retrieved and evaluated for inclusion by two reviewers (IS and DGA). Any instruments designed for the evaluation of study quality, although closely related to reporting guidelines, were not included.

From the starting point of these very broad inclusion criteria, we then narrowed our selection to include only very broad, generic guidelines on reporting medical research, and to exclude more specialised or narrow guidelines. In total, 37 reporting guidelines met our criteria and were eligible for the survey (File S2).

Questionnaire design.

We aimed to survey the developers of these 37 guidelines in order to gain insight into their development processes. We designed a four-page, 25-item questionnaire covering the main aspects of reporting guideline development: the characteristics of the development group; motivation; guideline development process; dissemination, uptake, and impact of the guideline; funding; and problems experienced during the development process. Where possible we included multiple options and encouraged respondents to tick all answers that applied; some open questions required descriptive answers.

The questionnaire was e-mailed, together with a covering letter describing the purpose of the project, to the corresponding author of the reporting guideline. Two reminders were sent to non-respondents, and when no response was received another author was approached.

Data collection and analysis.

The answers to multiple-choice questions were summarised as percentages of the whole sample; the answers to open descriptive questions were summarised in brief statements. Here we present our aggregate findings.
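As an illustration of the summary method described above, the following minimal sketch expresses a count as a whole-number percentage of the sample. The function name `percent_of_sample` and the dictionary labels are hypothetical; the counts are those quoted in this article, and rounding to the nearest whole percent is an assumption that happens to reproduce the reported figures.

```python
def percent_of_sample(count: int, sample_size: int) -> int:
    """Return `count` as a percentage of `sample_size`, rounded to a whole number."""
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return round(100 * count / sample_size)


SURVEYED = 37      # guideline developers approached
RESPONDENTS = 30   # developers who returned the questionnaire

# Counts quoted in the article, all out of the 30 respondents.
# The labels are shorthand chosen for this sketch.
counts = {
    "international multidisciplinary group": 22,
    "expect guideline to need updating": 25,
    "sought comments from outside experts": 17,
    "included a flow diagram": 6,
    "uptake by journals formally evaluated": 5,
}

if __name__ == "__main__":
    print(f"response rate: {percent_of_sample(RESPONDENTS, SURVEYED)}%")
    for label, count in counts.items():
        print(f"{label}: {count} of {RESPONDENTS} ({percent_of_sample(count, RESPONDENTS)}%)")
```

Using the whole sample of respondents as the denominator, rather than only those who answered a given question, keeps percentages comparable across questions, at the cost of slightly understating proportions where some questions were left unanswered.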

Our Findings

Characteristics of reporting guidelines identified from the literature.

The 37 eligible guidelines (File S2) were very diverse, ranging from well-known general guidelines for reporting results of specific types of research studies, such as CONSORT for randomised trials [13] and STARD for diagnostic accuracy studies [15], to the first attempts to harmonise reporting of a particular aspect of research, such as the search strategy for systematic reviews [20].

Two of the identified guidelines provided standards for reporting data (MIAME [Minimum Information about a Microarray Experiment] for microarray-based expression gene data [21] and MIAPE [Minimum Information about a Proteomics Experiment] for proteomics experiments [22]).

The CONSORT Statement has probably been the most influential reporting guideline published thus far. In our sample of 37 guidelines, six were extensions of the CONSORT Statement [23–28]; two provided recommendations complementary to CONSORT [29,30]; and ten referred to or claimed to be influenced by the CONSORT Statement development [14–17,31–36].

Survey results.

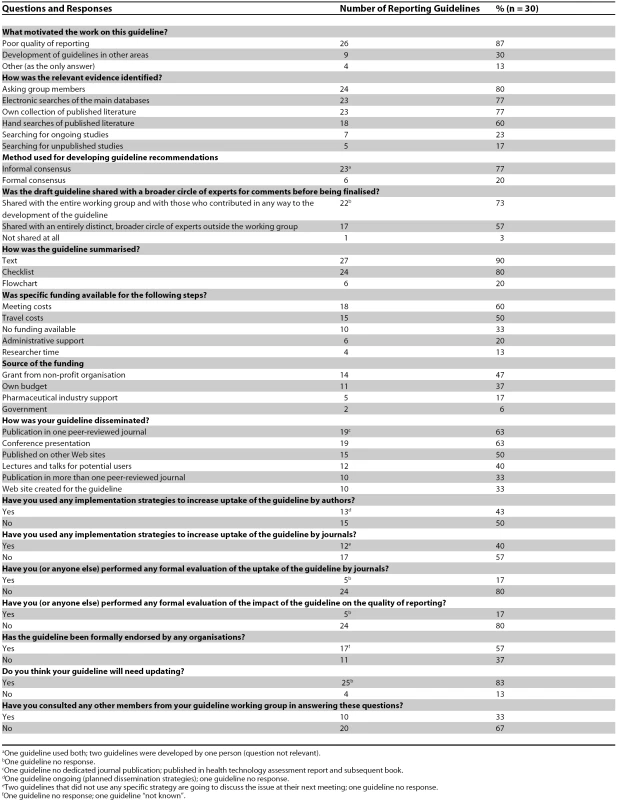

We received responses from 30 of 37 guideline developers (81% response rate). Most respondents answered all questions. Where some questions were left unanswered, this is indicated. Ten respondents (33%) consulted a colleague when completing the questionnaire. Table 1 summarises responses to multiple-choice questions related to basic aspects of reporting guideline development processes.

Table 1. Characteristics of Reporting Guideline Development Processes (Summary of Responses to Multiple-Choice Questions)

a One guideline used both; two guidelines were developed by one person (question not relevant).

Guideline development group.

Most of the surveyed guidelines were developed by an international multidisciplinary group (22 of 30; 73%); only two guidelines were developed by one person. The median number of people participating in the guideline development was 22 (range: 1–118). Nineteen groups established a core (writing) group that had a median number of seven people (range: 3–24). The membership of the working groups reflected the guidelines' scope—statisticians, journal editors, clinicians, and epidemiologists were included in most development groups. Some groups included medical writers, social scientists, information specialists, health economists, and representatives of the pharmaceutical industry. One group also invited representatives of grant funding agencies; another group invited a patient representative. The guidelines providing standards for reporting data also included relevant bioinformatics experts and software vendors.

Motivation for the guideline development.

Poor quality of reporting was the most common motivating factor for the guideline development (87%), followed by the influence of other reporting guidelines being developed (30%). Among other contributing factors were difficulties encountered in critical appraisal and systematic reviews; lack of reporting standards in a particular field; and concerns about inappropriate publication processes. Two guidelines were commissioned by professional organisations and one was developed at the request of a medical journal.

Guideline development processes.

The guidelines were developed in various ways. The main activities involved in guideline development are summarised in Box 1. Not all guidelines, however, went through all the stages or proceeded in that order.

Box 1. Steps Used in the Development of Reporting Guidelines

- Generating ideas/checklist items (through telephone conversations, face-to-face meetings, and guideline topics mandated by professional association)
- Literature review (systematic review)
- Critical appraisal of identified evidence
- Sharing relevant evidence with a larger team
- Agenda for discussion generating items
- Discussion of items
- Agreement on phrasing and explanation of items
- Writing the first draft
- Circulation for comments
- Incorporation of comments
- Repeating previous two steps until reaching consensus among group

Some of the guideline groups started their work from scratch while others adapted existing guidelines for a new or more specialised research field. All the groups but one made an effort to collect relevant evidence to support the recommendations; some used comprehensive literature searches, while others retrieved the relevant literature in a less formal way (e.g., personal article collections of group members, asking experts in the field). Acquired evidence (the full-text papers or summary statements with extracted data) was shared with the group through e-mail, postal mail, or Web site posting. Most groups (90%) organised one or more face-to-face meetings at various stages of the guideline development: either at the very beginning of the process to generate ideas, or later to discuss pre-prepared evidence summaries or draft guidelines. Meetings usually had both small group working sessions and plenary discussion sessions. Reporting recommendations were mostly developed through informal consensus (77%). Six groups used formal consensus methods (three used a modified Delphi technique, one used focus groups with a modified Delphi technique, and two did not specify the method). Draft guidelines were shared with the entire working group for comment. Seventeen groups (57%) also sought comments on the draft guideline from a distinct, broader circle of experts outside the working group.

Reporting recommendations were summarised in the form of text (90%) and/or checklists (80%; with three guidelines being a checklist only). Six groups (20%) also included a flow diagram and one guideline related to data reporting standards included features specific for this type of report (formal data representation, controlled vocabularies).

On average, the guidelines took 20 months to complete (range: 3–62 months) and another 11 months, on average, to publish (range: 0–30 months).

Twenty-five respondents (83%) felt that their guidelines would need to be updated in the future.

Dissemination, uptake, and impact of the guidelines.

Publication in a peer-reviewed journal and presentation at conferences were the most frequently used means for guideline dissemination. Ten groups negotiated multiple journal publications to ensure wider and quicker spread. The Internet was also used for dissemination (Table 1).

Medical journals played an active role in the dissemination and implementation of one third of the surveyed guidelines by publishing commentaries endorsing the guidelines or providing Web links or citations to the reporting guidelines in their “Instructions to Authors”.

About half of the guideline groups recognised the need to develop implementation strategies to increase the uptake of the guideline by authors and journals (Table 1). Strategies used included publication of supporting articles, letters, commentaries, and editorials; development of a detailed “explanation and elaboration” document; translations; free availability from the Web sites (including journals' sites); inclusion in “Instructions to Authors” of various journals; and organising courses and workshops promoting the use of the reporting guideline.

Solicitation of support from potentially relevant partners, including journal editors, funding agencies, professional organisations and societies, or where relevant, software vendors, was seen as one of the crucial aspects of successful implementation. Active collaboration with journals was recognised in particular—editors were often invited to participate in the development of the guidelines (25 groups, 83%). Some groups also asked for the guideline to be endorsed by the journal and included in its “Instructions to Authors” (six groups, 20%). One group also approached journals to discuss how the guideline could be used efficiently within the editorial process.

Guideline uptake by medical journals was formally evaluated for five guidelines (17%), by a survey of “Instructions to Authors”, by a count of journals that had adopted the guideline, or by both. The impact of guidelines on the quality of reporting was evaluated in five cases.

Problems experienced during the guideline development process.

The major problems encountered are listed in Box 2. Lack of sufficient funding and time constraints were identified as the most pressing issues. One third of the guidelines were developed without any dedicated funding (Table 1). Where funding was available, it was provided mostly to cover costs of the meetings and associated travel expenses. Only four groups had secured funding for research-related activities such as searching for evidence, data synthesis, and writing. Seven groups highlighted the consequences of inadequate funding as one of the main obstacles in guideline development.

Box 2. Major Problems Experienced During the Guideline Development Process

- Lack of sufficient funding
- Time constraints
- Work not considered as academic research
- Lack of evidence on which to base the guidelines
- Decisions on terminology for the guidelines
- Reluctance of journals to publish guidelines
- Working group personnel changes
- Existence of more than one reporting guideline (in a single area of endeavour)
- Perceived plagiarism (one group)

A closely related problem was the perception that development of reporting guidelines was not considered as research in some academic institutions. The survey participants also mentioned problems with logistics, such as managing the large number of people involved in the guideline development or assembling the international team with almost no funding.

Discussion

Reporting guidelines have the potential to improve the quality of reporting and consequently the quality of research [18,19]. But such guidelines could conceivably have downsides as well—for example, they might encourage some authors to simply report the information required by the guidance regardless of whether it was actually part of the study's conduct.

Development of reporting guidelines should follow robust methodology if they are to be widely accepted by the relevant scientific and health care publishing community.

Our survey found evidence of a widespread lack of funding for developing guidelines. Some of the national health research funding agencies have begun to recognise this problem. For example, the CONSORT group recently obtained a five-year funding grant from the UK National Health Service's Research & Development Methodology Programme for partial support of its activities, but such funding is rare. Although our survey did not enquire about the exact costs of developing reporting guidelines, we estimate that the costs might be in the region of UK£50,000 for a single appropriately developed consensus reporting guideline. If reporting guidelines are to remain evidence-based, they need to be regularly updated, which also requires funding. As yet, only one guideline team (the developers of CONSORT) have published a revision, although 25 (83%) others recognised the need to do so in due course.

When developing reporting guidelines, it is important to include an evaluation component. In our survey, evaluations of the uptake of the guideline by journals and/or evaluation of the guideline's impact on the quality of reporting had been attempted for only eight (27%) guidelines. Even though the CONSORT Statement is widely accepted, only 36 (22%) of 167 journals surveyed by Altman [37] referred to CONSORT in their guidance to authors, and many used ambiguous language in describing what was expected from authors or referred to an old version of the Statement.

Information gathered from the survey shows a need to harmonise methods used in the development of reporting guidelines. It is also important to evaluate the impact of guidelines. Because this type of evaluation research is difficult to fund, such studies might have a better chance of receiving support if they are undertaken collaboratively by a combination of research funders, authors, editors, and publishers. Such collaboration might also reduce the risk of bias inherent in self-evaluations.

In addition to the 37 guidelines we targeted in our survey, we also identified a considerable number of specialised reporting guidelines providing recommendations for reporting specific medical conditions or procedures (see File S1). Although we excluded these specialised guidelines from our survey, we recognise their importance and plan to engage their developers in the EQUATOR Network.

We hope that EQUATOR will act as an “umbrella” organisation, bringing together developers of reporting guidelines, medical journal editors and peer reviewers, research funding bodies, and other key stakeholders with a mutual interest in improving the quality of research publications and research itself. Our goal is to improve the quality and reliability of the medical literature by promoting the transparent and accurate reporting of health research. We will actively promote the use of reporting guidelines among journals, as their effectiveness critically depends on support from editors of influential medical journals.

Poor reporting cannot be seen as an isolated problem that can be solved by targeting only one of the parties involved in disseminating research findings, be it authors, editors, or peer reviewers. A well-coordinated effort, with collaboration between the research and publishing communities, strongly supported by research funders, will likely have a better chance of leading to improved reporting of health research. In turn, better reporting is likely to influence the quality and impact of future research.

Supporting Information

References

1. Chan AW, Altman DG (2005) Epidemiology and reporting of randomised trials published in PubMed journals. Lancet 365: 1159–1162.

2. Gibson CA, Kirk EP, LeCheminant JD, Bailey BW Jr, Huang G (2005) Reporting quality of randomized trials in the diet and exercise literature for weight loss. BMC Med Res Methodol 5: 9.

3. Hayden JA, Cote P, Bombardier C (2006) Evaluation of the quality of prognosis studies in systematic reviews. Ann Intern Med 144: 427–437.

4. Latronico N, Botteri M, Minelli C, Zanotti C, Bertolini G (2002) Quality of reporting of randomised controlled trials in the intensive care literature. A systematic analysis of papers published in Intensive Care Medicine over 26 years. Intensive Care Med 28: 1316–1323.

5. Lee CW, Chi KN (2000) The standard of reporting of health-related quality of life in clinical cancer trials. J Clin Epidemiol 53: 451–458.

6. Long CR, Nick TG, Kao C (2004) Original research published in the chiropractic literature: Evaluation of the research report. J Manipulative Physiol Ther 27: 223–228.

7. Mallett S, Deeks J, Halligan S, Hopewell S, Cornelius V (2006) Systematic reviews of diagnostic tests in cancer: Review of methods and reporting. BMJ 333: 413.

8. Mills E, Loke YK, Wu P, Montori VM, Perri D (2004) Determining the reporting quality of RCTs in clinical pharmacology. Br J Clin Pharmacol 58: 61–65.

9. Pocock SJ, Collier TJ, Dandreo KJ, de Stavola BL, Goldman MB (2004) Issues in the reporting of epidemiological studies: A survey of recent practice. BMJ 329: 883.

10. Riley RD, Abrams KR, Sutton AJ, Lambert PC, Jones DR (2003) Reporting of prognostic markers: Current problems and development of guidelines for evidence-based practice in the future. Br J Cancer 88: 1191–1198.

11. Smidt N, Rutjes AW, van der Windt DA, Ostelo RW, Reitsma JB (2005) Quality of reporting of diagnostic accuracy studies. Radiology 235: 347–353.

12. Thakur A, Wang EC, Chiu TT, Chen W, Ko CY (2001) Methodology standards associated with quality reporting in clinical studies in pediatric surgery journals. J Pediatr Surg 36: 1160–1164.

13. Moher D, Schulz KF, Altman DG (2001) The CONSORT statement: Revised recommendations for improving the quality of reports of parallel-group randomised trials. Lancet 357: 1191–1194.

14. Moher D, Cook DJ, Eastwood S, Olkin I, Rennie D (1999) Improving the quality of reports of meta-analyses of randomised controlled trials: The QUOROM statement. Quality of Reporting of Meta-analyses. Lancet 354: 1896–1900.

15. Bossuyt PM, Reitsma JB, Bruns DE, Gatsonis CA, Glasziou PP (2003) Towards complete and accurate reporting of studies of diagnostic accuracy: the STARD initiative. Standards for Reporting of Diagnostic Accuracy. Clin Chem 49: 1–6.

16. STROBE Initiative (2007) STROBE statement: Strengthening the reporting of observational studies in epidemiology. Available: http://www.strobe-statement.org/. Accessed 21 May 2008.

17. McShane LM, Altman DG, Sauerbrei W, Taube SE, Gion M (2005) REporting recommendations for tumour MARKer prognostic studies (REMARK). Br J Cancer 93: 387–391.

18. Plint AC, Moher D, Morrison A, Schulz K, Altman DG (2006) Does the CONSORT checklist improve the quality of reports of randomised controlled trials? A systematic review. Med J Aust 185: 263–267.

19. Smidt N, Rutjes AWS, van der Windt DAWM, Ostelo RWJG, Bossuyt PM (2006) The quality of diagnostic accuracy studies since the STARD statement: Has it improved? Neurology 67: 792–797.

20. Booth A (2006) “Brimful of STARLITE”: Toward standards for reporting literature searches. J Med Libr Assoc 94: 421–429.

21. Brazma A, Hingamp P, Quackenbush J, Sherlock G, Spellman P (2001) Minimum information about a microarray experiment (MIAME) toward standards for microarray data. Nat Genet 29: 365–371.

22. HUPO Proteomics Standards Initiative (2008) The minimum information about a proteomics experiment (MIAPE): Reporting guidelines for proteomics. Available: http://www.psidev.info/index.php?q=node/91. Accessed 21 May 2008.

23. Campbell MK, Elbourne DR, Altman DG (2004) CONSORT statement: Extension to cluster randomised trials. BMJ 328: 702–708.

24. Davidson KW, Goldstein M, Kaplan RM, Kaufmann PG, Knatterud GL (2003) Evidence-based behavioral medicine: What is it and how do we achieve it? Ann Behav Med 26: 161–171.

25. Gagnier JJ, Boon H, Rochon P, Moher D, Barnes J (2006) Reporting randomized, controlled trials of herbal interventions: An elaborated CONSORT statement. Ann Intern Med 144: 364–367.

26. Ioannidis JPA, Evans SJW, Gotzsche PC, O'Neill RT, Altman DG (2004) Better reporting of harms in randomized trials: An extension of the CONSORT statement. Ann Intern Med 141: 781–788.

27. MacPherson H, White A, Cummings M, Jobst K, Rose K (2002) Standards for reporting interventions in controlled trials of acupuncture: The STRICTA recommendations. STandards for Reporting Interventions in Controlled Trials of Acupuncture. Acupunct Med 20: 22–25.

28. Piaggio G, Elbourne DR, Altman DG, Pocock SJ, Evans SJW (2006) Reporting of noninferiority and equivalence randomized trials: An extension of the CONSORT statement. JAMA 295: 1152–1160.

29. Chang SM, Reynolds SL, Butowski N, Lamborn KR, Buckner JC (2005) GNOSIS: Guidelines for neurooncology: Standards for investigational studies–reporting of phase 1 and phase 2 clinical trials. Neuro Oncol 7: 425–434.

30. Spiegelhalter DJ, Myles JP, Jones DR, Abrams KR (2000) Bayesian methods in health technology assessment: A review. Health Technol Assess 4: 1–130.

31. Aronson JK (2003) Anecdotes as evidence: We need guidelines for reporting anecdotes of suspected adverse drug reactions. BMJ 326: 1346.

32. Davidoff F, Batalden P (2005) Toward stronger evidence on quality improvement. Draft publication guidelines: the beginning of a consensus project. Qual Saf Health Care 14: 319–325.

33. Shiffman RN, Shekelle P, Overhage JM, Slutsky J, Grimshaw J (2003) Standardized reporting of clinical practice guidelines: A proposal from the Conference on Guideline Standardization. Ann Intern Med 139: 493–498.

34. Sung L, Hayden J, Greenberg ML, Koren G, Feldman BM (2005) Seven items were identified for inclusion when reporting a Bayesian analysis of a clinical study. J Clin Epidemiol 58: 261–268.

35. Wager E, Field EA, Grossman L (2003) Good publication practice for pharmaceutical companies. Curr Med Res Opin 19: 149–154.

36. Stone SP, Cooper BS, Kibbler CC, Cookson BD, Roberts JA (2007) The ORION statement: Guidelines for transparent reporting of outbreak reports and intervention studies of nosocomial infection. Lancet Infect Dis 7: 282–288.

37. Altman DG, for the CONSORT Group (2005) Endorsement of the CONSORT statement by high impact medical journals: Survey of instructions for authors. BMJ 330: 1056–1057.
